Transfer learning is not just a shortcut to faster AI development—it is a strategic method for reducing data risk, cost, and time by leveraging knowledge from pre-trained models and adapting it intelligently to new problems.

Many beginners believe machine learning requires massive datasets and expensive GPU training. That assumption stops projects before they start. The truth is simple: in most practical business scenarios, you should not train from scratch. Instead, you adapt an existing model.

Transfer learning allows you to take a model trained on a large dataset and fine-tune it for your specific problem. It saves time, reduces cost, and often improves accuracy—if applied correctly.

Key Takeaways

- Transfer learning reuses knowledge from pre-trained models to solve new tasks efficiently.

- It reduces data requirements but introduces domain-mismatch risks.

- It works best when source and target domains are closely related.

- Fine-tuning strategy often affects performance more than model size does.

- It is a cost-control strategy, not just a technical method.

What Is Transfer Learning?

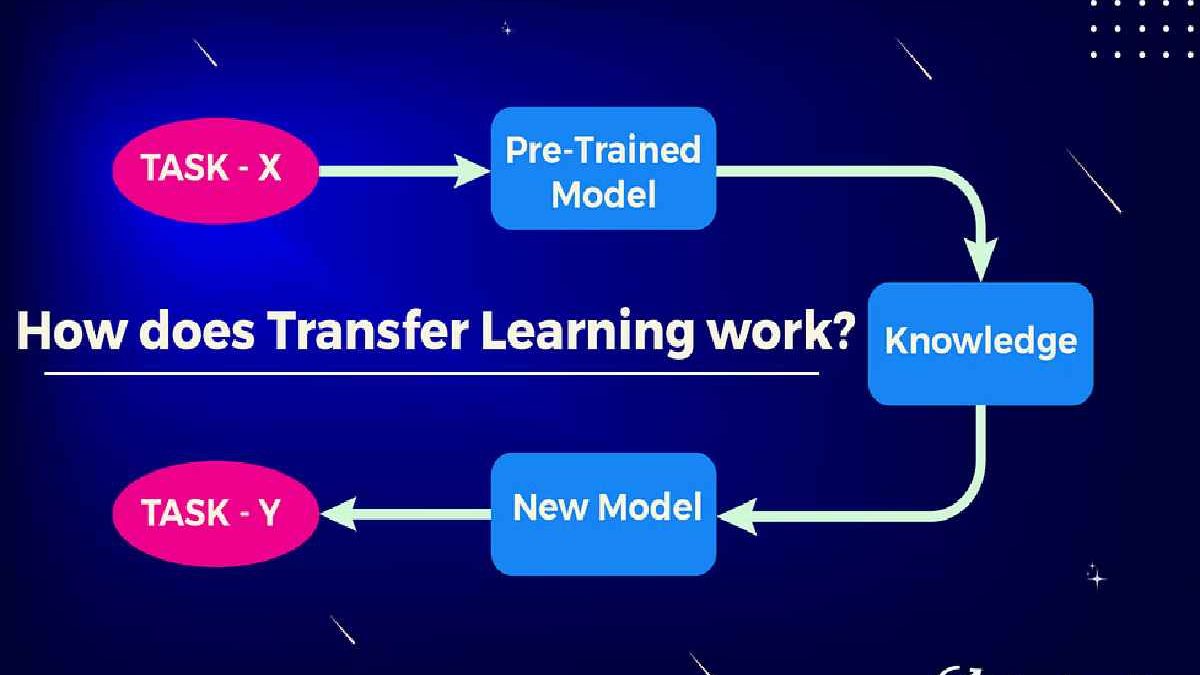

Transfer learning is a machine learning method where a model developed for one task is reused as the starting point for a related task.

Instead of building everything from zero, you leverage pre-trained architectures such as:

- BERT (Google AI)

- ResNet (Microsoft Research)

- GPT architectures (OpenAI)

For example:

- A model trained on millions of images can be fine-tuned to classify medical scans.

- A language model trained on web data can be adapted for legal document analysis.

Research from institutions such as Stanford and Google AI indicates that deep networks tend to learn general features in their early layers (edges in images, basic grammar patterns in text) and task-specific patterns in their later layers. Transfer learning reuses the general layers and retrains or replaces the task-specific ones.
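To make the idea of reusing general layers concrete, here is a minimal sketch in plain Python. It assumes a tiny stand-in "pre-trained" base (`PRETRAINED_BASE`, hard-coded for illustration and not taken from any real model) whose weights stay frozen, and it trains only a new logistic-regression head on a small made-up target dataset. All names and numbers are hypothetical.

```python
import math

# Stand-in for a pre-trained base layer. In a real project these weights would
# come from a published model; here they are hard-coded assumptions.
PRETRAINED_BASE = [[1.0, -1.0], [0.5, 0.5]]


def base_features(x, base=PRETRAINED_BASE):
    """Frozen base layer: ReLU(W @ x). The weights are read, never updated."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in base]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def head_probability(features, head):
    """Probability of class 1 from the task-specific head."""
    weights, bias = head
    return sigmoid(sum(w * f for w, f in zip(weights, features)) + bias)


def train_head(data, epochs=500, lr=0.1):
    """Fit a fresh logistic-regression head on top of the frozen base."""
    weights, bias = [0.0, 0.0], 0.0  # new final layer, initialised from scratch
    for _ in range(epochs):
        for x, y in data:
            feats = base_features(x)
            # Gradient of the log loss with respect to the head's pre-activation.
            error = head_probability(feats, (weights, bias)) - y
            weights = [w - lr * error * f for w, f in zip(weights, feats)]
            bias -= lr * error
    return weights, bias


def predict(x, head):
    return int(head_probability(base_features(x), head) >= 0.5)


# Tiny hypothetical target dataset: label 1 when the first input is larger.
target_data = [([2.0, 0.0], 1), ([3.0, 1.0], 1), ([0.0, 2.0], 0), ([1.0, 3.0], 0)]
head = train_head(target_data)
accuracy = sum(predict(x, head) == y for x, y in target_data) / len(target_data)
print(accuracy)  # 1.0 on this toy training set
```

Only `weights` and `bias` in the head change during training. The base is reused as-is, which is why so few target examples are enough in this toy setting. In a framework such as PyTorch, the equivalent of "frozen" is usually setting `requires_grad` to `False` on the base parameters.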

Why Transfer Learning Matters in 2026

AI training costs are rising. Training a large model from scratch can cost anywhere from thousands to millions of dollars in compute.

Transfer learning solves three major problems:

- Data scarcity

- Budget constraints

- Speed to deployment

For startups and SMEs, this is critical. Instead of months of model training, deployment can happen in weeks.

Industries using it heavily:

- Healthcare diagnostics

- E-commerce recommendation systems

- Financial fraud detection

- Beauty & skincare product recommendation engines

Types of Transfer Learning

1. Inductive Transfer Learning

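The target task differs from the source task, and at least some labeled target data is available. This is the most common scenario.

2. Transductive Transfer Learning

The task stays the same, but the source and target domains differ.

3. Unsupervised Transfer Learning

Knowledge is transferred without labeled target data.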

When Should You Use Transfer Learning?

Use this checklist:

- Your labeled dataset is small (as a rough rule of thumb, under 100k samples).

- A high-quality pre-trained model exists.

- Source and target domains share similarity.

- You need faster time to market.
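The checklist above can be turned into a quick self-assessment. The sketch below is only an illustration: `ProjectProfile`, its field names, and `checklist_score` are hypothetical, and the 100k threshold is the article's rule of thumb rather than a hard limit.

```python
from dataclasses import dataclass


@dataclass
class ProjectProfile:
    """Hypothetical description of a project; field names are illustrative."""
    num_samples: int
    pretrained_model_available: bool
    domains_similar: bool
    needs_fast_launch: bool


def checklist_score(profile: ProjectProfile, small_data_threshold: int = 100_000) -> int:
    """Count how many of the four checklist items the project satisfies."""
    checks = [
        profile.num_samples < small_data_threshold,
        profile.pretrained_model_available,
        profile.domains_similar,
        profile.needs_fast_launch,
    ]
    return sum(checks)


profile = ProjectProfile(
    num_samples=20_000,
    pretrained_model_available=True,
    domains_similar=True,
    needs_fast_launch=False,
)
print(checklist_score(profile))  # 3
```

A higher score suggests transfer learning is worth trying first. The score is a conversation starter, not a substitute for evaluating domain similarity on real data.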

Decision Matrix

| Scenario | Train from Scratch | Transfer Learning |
|---|---|---|
| Small dataset | ❌ Risky | ✅ Ideal |
| High compute budget | ✅ Possible | ✅ Still efficient |
| Domain mismatch | ⚠️ Better | ❌ Risky |
| Fast launch needed | ❌ Slow | ✅ Fast |

When You Should NOT Use It

- If you own a massive proprietary dataset.

- If regulatory bias risks are high (e.g., medical or legal AI).

- If your domain differs completely from the source domain (e.g., a wildlife image model applied to satellite weather imagery).

Transfer learning inherits biases from the original model. That risk must be evaluated carefully.
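One simple way to start evaluating that risk is to compare accuracy across groups in your own evaluation set. The sketch below assumes you already have records of the form `(group, true_label, predicted_label)`. The group names and records are made up for illustration, and a per-group accuracy gap is only one of many possible bias checks.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group from (group, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(records):
    """Difference between the best-served and worst-served group."""
    scores = accuracy_by_group(records)
    return max(scores.values()) - min(scores.values())


# Hypothetical evaluation records, invented for this example.
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]
print(accuracy_by_group(records))  # {'group_a': 1.0, 'group_b': 0.5}
print(max_accuracy_gap(records))   # 0.5
```

A large gap like the one in this toy data would be a signal to investigate the pre-trained model's training data and the balance of your own fine-tuning set before deployment.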

Implementation Framework

1. Select a pre-trained model (TensorFlow Hub, PyTorch Hub).

2. Freeze the base layers initially.

3. Replace the final classification layer.

4. Train on the target dataset.

5. Gradually unfreeze layers if needed.

6. Evaluate carefully for bias and overfitting.
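The "freeze first, then gradually unfreeze" part of this framework can be expressed as a simple schedule. The sketch below is framework-agnostic and makes several assumptions: the layer names are hypothetical, the head is the last entry in the list, and one extra layer is unfrozen every `unfreeze_every` epochs, working backwards from the output.

```python
def trainable_layers(layer_names, epoch, unfreeze_every=2):
    """Return the layers that should be trainable at a given epoch.

    The head (last layer) is always trainable. Every `unfreeze_every` epochs,
    one more layer is unfrozen, starting from the layers closest to the output.
    """
    num_unfrozen = min(len(layer_names), 1 + epoch // unfreeze_every)
    return layer_names[-num_unfrozen:]


# Hypothetical layer names for a small vision model.
layers = ["conv1", "conv2", "conv3", "head"]
for epoch in range(0, 8, 2):
    print(epoch, trainable_layers(layers, epoch))
# 0 ['head']
# 2 ['conv3', 'head']
# 4 ['conv2', 'conv3', 'head']
# 6 ['conv1', 'conv2', 'conv3', 'head']
```

In PyTorch, a schedule like this would typically be applied by toggling `requires_grad` on each layer's parameters at the start of an epoch. Later layers are unfrozen first because they hold the most task-specific features, while the earliest layers are the most general and the least likely to need changes.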

Industry Comparison Tables

Pricing by Country

| Country | Avg AI Dev Cost (Monthly, qualitative) | AI Adoption Level | Primary Industry |
|---|---|---|---|
| USA | High | Very High | SaaS |
| UK | Medium-High | High | FinTech |
| India | Medium | Rapid Growth | IT Services |
| Germany | High | High | Manufacturing |
| Singapore | Medium-High | Advanced | Finance |

Major Brands Comparison

| Brand | Specialty | Strength | Limitation | Pricing Level |
|---|---|---|---|---|
| OpenAI | NLP | Strong language models | API dependency | Premium |
| Google AI | Vision/NLP | Research depth | Complex ecosystem | High |
| Microsoft Azure AI | Enterprise AI | Integration | Enterprise cost | High |
| AWS AI | Cloud ML | Scalability | Setup complexity | Medium-High |
| IBM Watson | Enterprise AI | Business tools | Less cutting-edge | Medium |

Beauty Niche: AI Personalization Pricing Trend

Yearly pricing trend (qualitative):

2019 → Moderate

2020 → Rising

2021 → High growth

2022 → Stabilized

2023–2026 → Competitive optimization

Skincare brands use transfer learning to personalize recommendations: a pre-trained vision model is fine-tuned on skin images and skin-type data.

Crypto Niche Example

How to Exchange

- Use regulated exchanges.

- Verify identity.

- Transfer funds.

- Trade tokens.

History

Crypto AI trading models evolved from rule-based systems to deep learning.

Geographic Adoption

| Country | Crypto Adoption |
|---|---|
| USA | High |
| India | Rapid Growth |
| Brazil | Emerging |
| UK | Moderate |
| UAE | High |

Value Comparison

Exchange fees vary significantly by region due to regulation and liquidity.

Risks and Limitations

- Bias inheritance

- Domain mismatch

- Overfitting on small datasets

- Regulatory challenges (especially under the EU AI Act)

Transfer learning is powerful—but not magic.
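For the overfitting risk in particular, one widely used guard is early stopping: keep the weights from the epoch with the best validation loss and stop once the loss has stopped improving. This technique is not specific to transfer learning and is not prescribed by the framework above. The sketch below is a minimal illustration, and the loss values are hypothetical.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the epoch whose weights should be kept.

    Training is treated as stopped once the validation loss has failed to
    improve for `patience` consecutive epochs.
    """
    best_epoch, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss, waited = epoch, loss, 0
        else:
            waited += 1
            if waited >= patience:
                break  # stop here; later epochs are never run
    return best_epoch


# Hypothetical validation losses from fine-tuning on a small dataset.
losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.50]
print(early_stopping_epoch(losses))  # 2
```

Note the trade-off visible in the example: with `patience=2`, training stops before the later improvement at epoch 5 is ever seen. A larger patience spends more compute in exchange for a lower chance of stopping too early.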

Final Strategic Perspective

Transfer learning should be seen as a strategic accelerator, not a technical shortcut. When applied with domain alignment, bias control, and cost awareness, it dramatically reduces AI deployment risk.

Beginners should use it to learn efficiently. Professionals should use it to control cost and scale intelligently.

FAQs

1. Is transfer learning better than training from scratch?

Yes, in most scenarios with small to medium-sized datasets. It reduces cost and speeds up development, but domain similarity matters.

2. Does transfer learning reduce data needs?

Yes. It significantly reduces required labeled data because base knowledge is reused.

3. Can transfer learning introduce bias?

Yes. Models inherit bias from original training data. Always validate carefully.

4. Is transfer learning expensive?

No, not compared to full training. It saves compute and infrastructure costs.

5. Is it suitable for startups?

Absolutely. It shortens development time and eases budget constraints.

6. Can it be used in crypto trading AI?

Yes, but market volatility and regulation must be considered.

7. Is transfer learning used in beauty tech?

Yes. It powers skin analysis and recommendation systems.

8. What tools support transfer learning?

TensorFlow, PyTorch, and Hugging Face libraries.

9. When should enterprises avoid it?

When they own large proprietary datasets or must meet strict compliance requirements.

10. Does it work for both NLP and vision?

Yes. It is widely used in both domains.